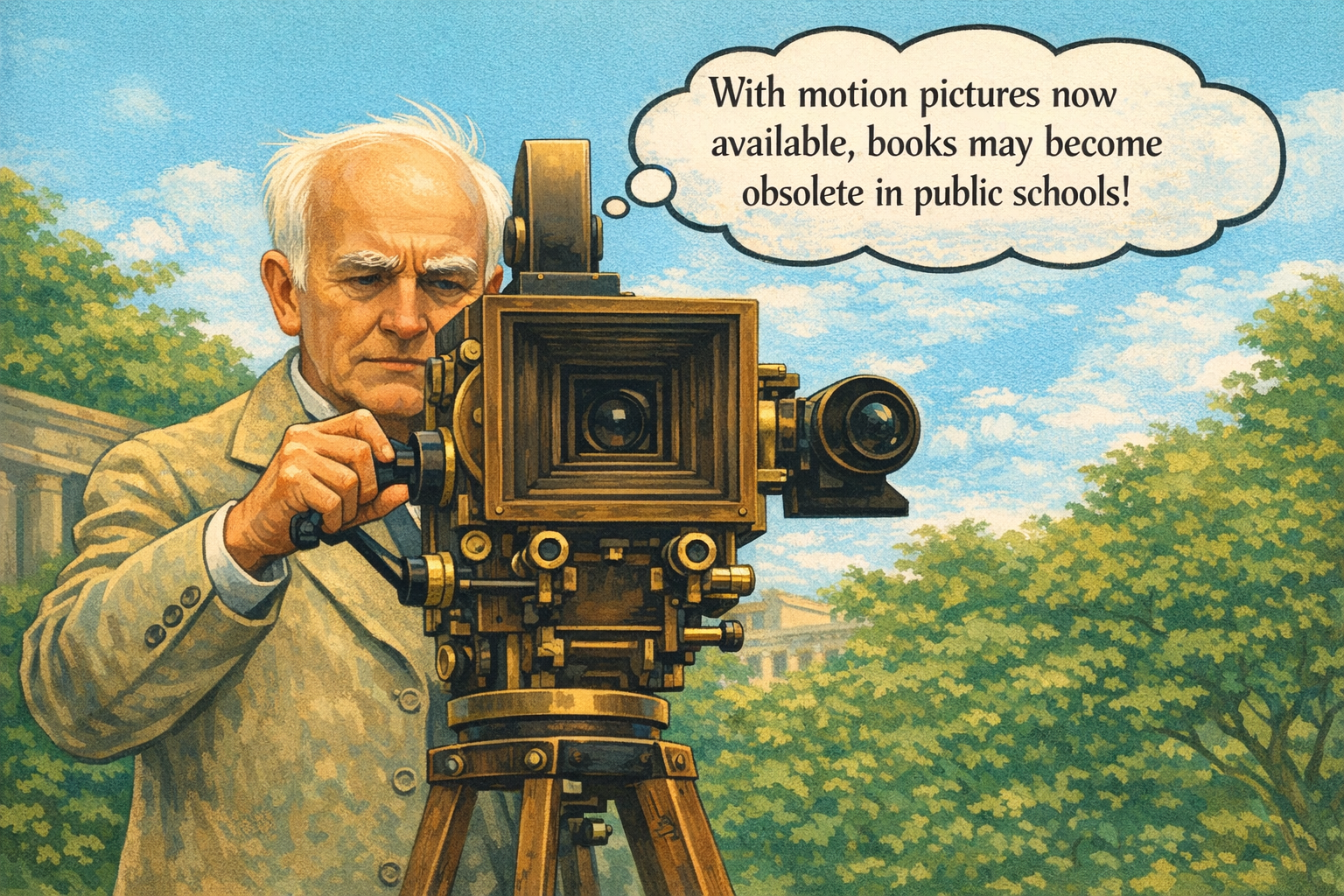

Are You Making the Same Mistake as Thomas Edison?

In 1913, Thomas Edison looked at the motion picture and saw the end of the textbook. "Books will soon be obsolete in the public schools," he declared. "It is possible to teach every branch of human knowledge with the motion picture. Our school system will be completely changed inside of ten years."

He was wrong, of course. But here's what's interesting: he wasn't unusual. Since the turn of the twentieth century, scholars and the news media have painted visions of technology-transformed classrooms and workplaces, each new medium arriving with breathless promises about what it would do for learning. Radio arrived in classrooms in the 1920s, and the BBC's first Director of Education was already musing about a "broadcasting university" within months of going on air. In the 1970s, international agencies such as the World Bank and UNESCO saw television as a panacea for education in developing countries. These hopes faded quickly when reality intervened. Then, personal computers were going to individualize learning at scale. Stanford professor Patrick Suppes predicted in 1966 that computers would give every child the personal services of a tutor as well-informed and responsive as Aristotle. Then came the internet, the dot-com boom, and a wave of investment in online learning that largely collapsed before rebounding, evolving, and eventually producing the landscape most L&D professionals know intimately today: hybrid micro-learning, self-directed “I’ll figure it out with Google/Chat/YouTube” learning, and the dominance of the LMS.

The hopeful discourses for each medium throughout recent history are so similar that predictions for one educational technology can easily be substituted for another.

Personalization at scale. Access for everyone. The end of the inefficient, one-size-fits-all classroom. If you swapped "motion picture" for "radio," or "radio" for "internet," or "internet" for "AI" in most of these proclamations, you'd barely notice the difference.

We are having such a moment right now.

Generative AI is genuinely extraordinary. It’s more capable, more adaptive, and more integrated into the flow of work than any technology that preceded it. It will change how we design, deliver, and support learning in ways we're only beginning to understand. It will change the nature of work. At the same time, the pattern holds: the excitement is real, the investment is accelerating, and the risk of mistaking the tool for the solution is as high as it has ever been.

What the pattern also shows, consistently, is that the technologies that truly improve learning are the ones deployed in service of something that doesn't change: the underlying science of how people learn. That science has been building for decades, and it's remarkably stable. The technology changes. Our minds don’t.

Curiosity-Driven Design™

At Socratic Arts, we've been working at the intersection of learning science and technology since 2001, starting with our foundations at the pioneering, interdisciplinary Institute for the Learning Sciences at Northwestern University. That history has taught us something important: the question is never "what can this technology do?" The question is always, "What conditions cause people to learn?" and then "How can we use tools to create (and scale) those conditions?"

The answer we keep coming back to is curiosity. Learning happens when a person wants to learn, not when someone else decides they should. Our Curiosity-Driven Design™ methodology is built on learning science. We create the challenges and conditions where learners naturally become curious, ask questions, and are primed to engage. Everything else — the scenarios, the AI tools, the simulations, the coaching structures — is in service of that.

Here are the first principles that guide that work.

-

Effective learning design begins with a clear-eyed look at the people you're designing for: who they are, what they already know, what they genuinely need to be able to do differently, and which mistakes, misconceptions, and challenges are most common or most costly. Technology and delivery come afterward. When tools drive strategy, we end up optimizing the wrong thing. Tool-driven strategy creates the efficient delivery of something that doesn't change behavior. Human-driven strategy (with great tools supporting it) delivers the change your organization and learners need.

-

People engage with learning when it connects to something that authentically matters to them in their work and their lives. This sounds obvious, and yet a remarkable amount of corporate training presents content in a vacuum, as skills and concepts divorced from any context that would make them feel relevant. Cognitive science tells us this isn't just unmotivating; it's structurally ineffective. Memory is context dependent. What we learn and where we learned it are stored together, which means training that looks and feels nothing like the real work produces people who can't access what they practiced when they need it most. Authenticity isn't a design preference. It's a cognitive requirement.

-

Curiosity is the ignition. When learners encounter a genuinely interesting problem, one that reveals a gap between what they know and what they need to know, their minds become receptive in a way that no amount of front-loaded instruction can replicate. Decades of research in cognitive science, including the foundational work of John Bransford and colleagues, demonstrate that grappling with a meaningful challenge first creates what researchers call a "time for telling": a moment when learners are genuinely ready to learn because they've felt the gap themselves. This is why we design relevant, authentic challenges and ensure learners confront them before providing instruction, not after.

-

Real learning happens through authentic practice: decisions made, strategies attempted, consequences experienced, mistakes encountered, and new approaches devised. When instruction arrives before a learner has engaged with the problem it's meant to address, it tends to become what cognitive scientists call "inert knowledge": technically received, filed somewhere, but not reliably retrievable in the moment of need. Research on analogical and case-based reasoning has illustrated that people don't learn from principles in the abstract; they learn from situations and by comparing one with another. Those situations and related lessons are richer, more detailed, and therefore more memorable when we live them ourselves. We try something, see what happens, and adjust. Those detailed experiences trigger memories when a similar situation arises. The richer and more relevant the detail, the more likely we are to realize, “This reminds me of that.” That’s transfer.

-

People are most receptive to help when they're in the middle of solving a real problem and they know exactly what they don't understand. A well-designed job aid, a targeted expert tip, or a just-in-time tutorial lands very differently than the same information delivered before the learner knew they needed it. This is performance support done right: designed not just for the training environment, but for the actual moments of application back on the job.

-

Knowing what you did well, where the gaps are, and specifically how to improve is one of the most powerful levers in learning. Generic feedback, or no feedback, leaves learners guessing. Expert perspective, delivered close to the moment of practice, is what separates experiences that build genuine capability from experiences that check a completion box. This is also where AI has genuine and underutilized potential: not as a delivery mechanism, but as a feedback system that can respond to the specific decisions a learner made in a specific context.

-

Doing something, even repeatedly, isn't enough on its own. Without a structured opportunity to step back and examine what happened, to compare approaches, name what worked and why, and think through how it might apply differently in another context, each experience stays siloed. Reflection is what allows the mind to abstract and generalize. It's the mechanism by which one good scenario becomes a transferable capability rather than a one-time performance.

-

Learning doesn't happen from the neck up. Emotional tone shapes whether people can take in new information at all. Physical stamina affects attention. The need for connection, for psychological safety, for a felt sense of progress and achievement, all of it matters. An experience that is cognitively sound but emotionally flat, or that ignores the basic reality of how people experience time and attention, will underperform. Andrew Huberman's neuroscience research frames this well: the productive friction that drives learning is different from the stress that shuts it down. Designing the full experience means knowing the difference.

The principles above are grounded in decades of converging research across the interdisciplinary fields that inform the learning sciences, from case-based reasoning and dynamic memory theory, to Brown, Collins, and Duguid's foundational work on situated cognition, to Bransford's research on context-dependent memory and the conditions for transfer. We've been applying and testing this body of work with clients across industries for more than twenty years.

What AI changes is not the science. What AI opens up are the possibilities for applying it. Authentic practice at scale. Adaptive feedback that responds to the specific decisions a specific learner made. Role-play partners who push back rather than validate. Semi-Socratic tutors that withhold the easy answer long enough for the learner to find it themselves.

That is what we mean when we say AI-enabled learning. Not faster content delivery. Not cheaper compliance modules. The technology in service of the principles, which is, as it turns out, the only arrangement that has ever actually worked.

As AI reshapes your learning strategy, would you like to discuss approaches for keeping the learning sciences front and center?